IXI glasses: adaptive optics and a new optician‑led distribution model

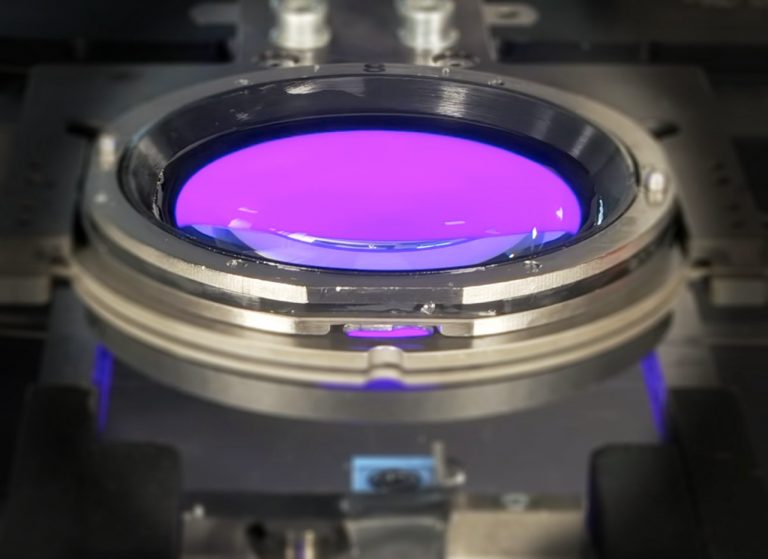

Progressive lenses completely reimagined: Finnish start-up IXI is working on electronically controlled glasses that dynamically switch between near, intermediate and far ranges. The company is now preparing its market entry together with a large French optician group.